The Problem

One of America’s largest consumer electronics chains, with over 1,000 retail stores across the US, Mexico and Canada, is preparing for a network-wide Wi-Fi refresh. To help its engineers fairly evaluate vendor options and define the next-generation Wi-Fi standard for a typical store, the company engaged 7SIGNAL.

In 2021, the six-year-old Wi-Fi networks in most of the company’s stores will be up for review, and many of them will need to be replaced. When the total cost of ownership of a new nationwide network can exceed $10 million, it makes sense to use an independent monitoring solution to compare the options and choose the Wi-Fi solution with the best overall value.

Should they stay with their current provider, or is a change justified? Switching vendors is not to be taken lightly. There are many hidden costs in supporting multiple vendors during the transition from one to the other. To justify switching vendors, the company needed to see a 25-30% price / performance advantage for the best alternative over the incumbent.

In the past, stores typically had 12 to 16 access points, depending on the store layout. Using today’s latest equipment, what is the optimum number of access points, radios and streams, and the best combination of features, architecture and placement, to satisfy certain minimum user performance thresholds?

As the enterprise Wi-Fi market has turned toward cloud management, the number of configuration options in terms of streams, radios, and management has multiplied. This makes it hard to compare like-for-like pricing, performance and functionality between vendor offerings.

With this level of investment, the company needed to know what it would be getting. Even though evaluating WLAN performance is extremely complicated, they were determined to find a fair way to run a real-world "bake-off" so that they had the full price / performance picture.

This is the strategic plan they devised:

The Solution

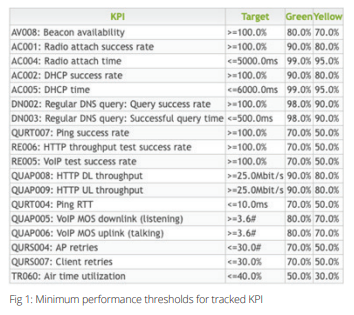

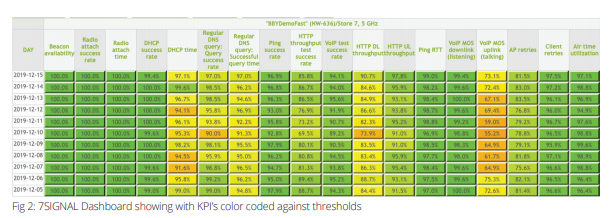

They invited a team from each of three vendors, namely Mist, Aruba, Cisco and Extreme Networks, to “give it their best shot,” in the words of the retail giant’s CTO. Each vendor was given two typical stores and asked to optimize its configuration to satisfy a checklist of minimum performance thresholds across a range of KPIs (Fig 1). The KPIs for each network were tracked in detail over the period by the 7SIGNAL Wireless Experience Monitoring platform and displayed in a custom dashboard.
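The idea of a checklist of minimum performance thresholds can be illustrated with a minimal sketch. Only the 25 Mbps downlink throughput threshold comes from this case study (it is mentioned in the results); the other KPI names, limits and measured values are hypothetical placeholders, and the code does not represent the 7SIGNAL platform or its API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Threshold:
    """A minimum (or maximum) acceptable value for one KPI."""
    limit: float
    higher_is_better: bool = True


# Hypothetical checklist. Only the 25 Mbps downlink figure appears in the
# case study; the other entries are assumed for illustration.
CHECKLIST = {
    "downlink_throughput_mbps": Threshold(25.0),
    "signal_strength_dbm": Threshold(-67.0),                 # assumed
    "latency_ms": Threshold(50.0, higher_is_better=False),   # assumed
}


def failing_kpis(measured, checklist=CHECKLIST):
    """Return the names of KPIs that are missing or miss their threshold."""
    failures = []
    for name, threshold in checklist.items():
        value = measured.get(name)
        if value is None:
            failures.append(name)  # an unmeasured KPI counts as a failure
            continue
        if threshold.higher_is_better:
            passed = value >= threshold.limit
        else:
            passed = value <= threshold.limit
        if not passed:
            failures.append(name)
    return failures


# Made-up measurements for one store.
store_a = {
    "downlink_throughput_mbps": 75.0,
    "signal_strength_dbm": -62.0,
    "latency_ms": 80.0,
}
print(failing_kpis(store_a))  # ['latency_ms']
```

Running the same check after each configuration change gives a simple pass/fail view of which thresholds a network still misses.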

With 7SIGNAL providing unbiased, real-world benchmark data they trusted, this independent-evaluator approach connected the dots between the vendors’ solution proposals and the real-world user experience the company could expect. Best of all, it put the onus on the vendors to do almost all of the work.

Each team was given two weeks to install and tune their network in each store. During this time, they had access to the 7SIGNAL dashboard (Fig 2), and they could talk to 7SIGNAL engineers for help using it, interpreting the results and discussing tactics for optimizing their own configuration. They could measure the impact of every tweak and compare their results with their own recent benchmark data or with historical data 7SIGNAL had already captured for the Wi-Fi network previously installed in the same store.

Each network had Sapphire Eye® sensors installed and Mobile Eye® agents deployed onto demo smartphones and laptops. Sapphire Eye sensors run a continuous battery of active and passive tests to provide a 24/7 view from every angle of the network, while Mobile Eye agents contribute more insights into the experience for users moving around the store.

"It was exciting to see the teams pull performance improvements out of a hat, one after the other."

The Results

No vendor was immune to unexpected anomalies. All vendors needed to make numerous adjustments, based on 7SIGNAL analytics, to inch up their baseline results bit by bit. “When it comes to Wi-Fi performance, 7SIGNAL are the guys that the smartest guys in the room turn to when they need help,” added the CTO. “It was exciting to watch the teams as they made one performance improvement after another, based on the 7SIGNAL dashboards.”

During the setup phase, each vendor made substantial improvements to their own solution. “I think we were all surprised how much we could improve when the KPIs are presented in a meaningful way,” said one Senior Field Engineer. "In one of the networks they increased download throughput by as much as 176% over the first attempt."

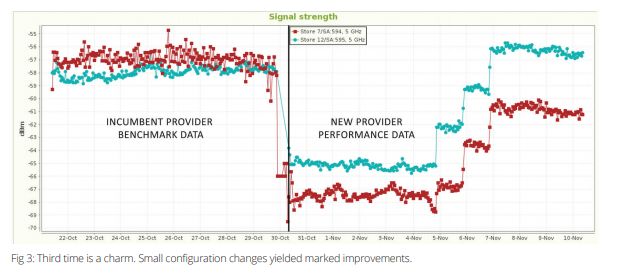

On average, the performance increase across the six networks over the period was 83%. The chart below shows signal strength for the 5 GHz network in each of two stores, improving as three sets of changes are made. One of the networks ends up beating the original benchmark, while the other still falls short.

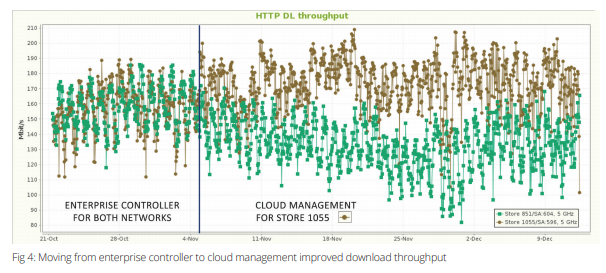

Another of the participants, counterintuitively, saw a marked improvement in downlink throughput when they changed the configuration from an enterprise controller to cloud management.

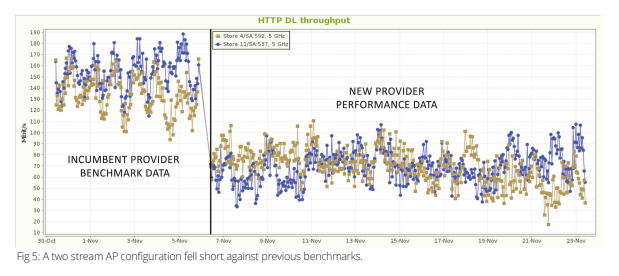

The participant highlighted in Fig 5 may have shot themselves in the foot by opting for 2-stream APs. Or maybe they were the smart ones. Two streams should mean a price advantage, and 3-stream APs could be argued to be a waste of money if 2-stream APs already exceed the 25 Mbps threshold by about 200%.
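The headroom arithmetic in the paragraph above can be made concrete with a short sketch. It assumes that "exceeds the threshold by about 200%" means the measured value is roughly three times the threshold. Under the alternative reading, "200% of the threshold", the figure would be about 50 Mbps instead. The function names are illustrative only.

```python
def percent_above(value, threshold):
    """Percentage by which value exceeds threshold (negative if it falls short)."""
    return (value - threshold) / threshold * 100.0


def value_at_percent_above(threshold, percent):
    """Value that exceeds threshold by the given percentage."""
    return threshold * (1 + percent / 100.0)


THRESHOLD_MBPS = 25.0  # downlink threshold quoted in the case study

# Assumed reading: "exceeds by about 200%" means threshold plus 200% of it.
two_stream_mbps = value_at_percent_above(THRESHOLD_MBPS, 200.0)
print(two_stream_mbps)                                 # 75.0
print(percent_above(two_stream_mbps, THRESHOLD_MBPS))  # 200.0
```

On that reading, the 2-stream network would deliver roughly 75 Mbps against a 25 Mbps requirement, which is why extra streams may add cost without changing the pass/fail outcome.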

We don’t know how price, performance and other criteria will ultimately be weighed against one another. What is clear is that this strategic benchmarking approach successfully delivered the like-for-like performance comparison the company hoped for.
